Here’s a familiar scene:

It’s December, and your leadership team is reviewing next year’s priorities. The board has asked for an AI strategy. Someone asks:

“Who’s going to own this?”

Eyes move around the room. No one is quite sure. Finally, someone is tapped to “lead AI” without a clear definition of what that means.

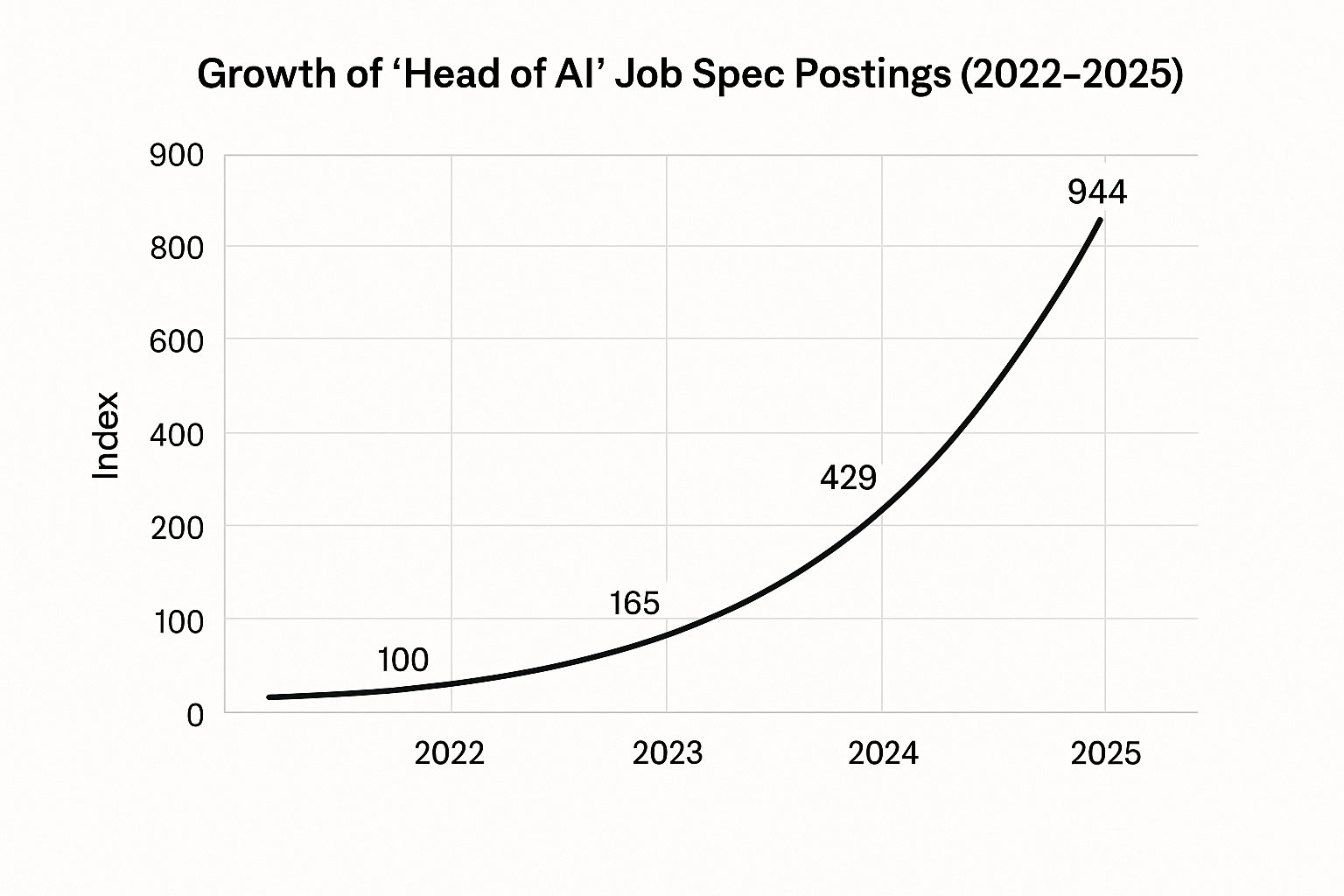

AI is moving faster than org charts. Three years ago, “Head of AI” was barely a role. Today, it’s one of the most sought-after seats on the executive team.

So today we’re asking:

- Why did this role explode so quickly?

- What does a Head of AI actually do?

- And how do you know you’re qualified for the job?

Why do we need a Head of AI?

The emergence of this role in organizations is driven by three shifts:

- AI moved from experimentation to economics.

Decisions around customer support, search, operations, underwriting, and fraud now directly affect revenue and cost. - Boards want AI accountability.

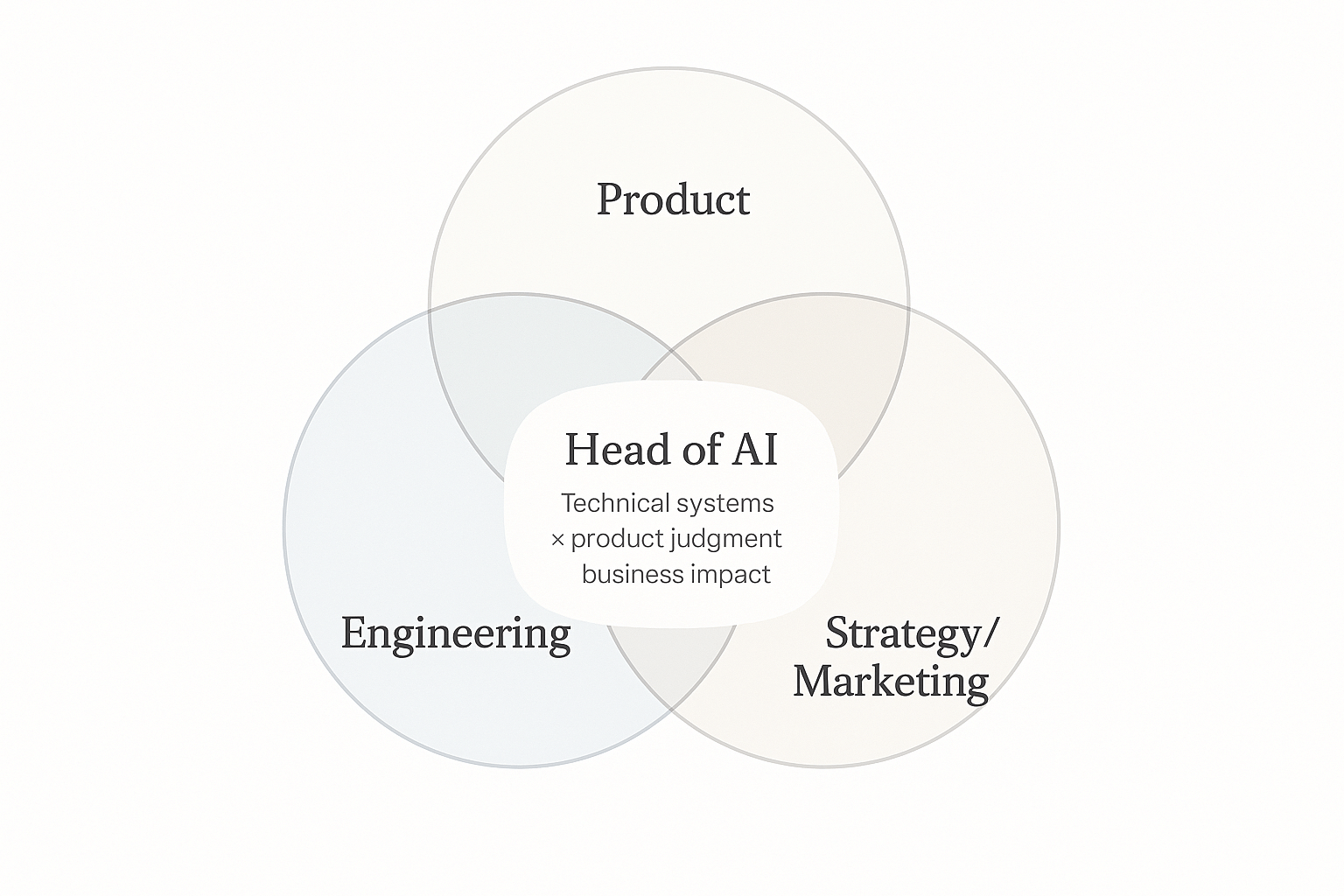

“Innovation” slides are no longer enough. Leadership wants clarity, risk management, and measurable outcomes. - AI sits between functions.

Engineering, data, product, risk, and compliance all intersect — yet no single function historically owned that intersection. The Head of AI fills that gap.

Why does everyone want the job?

The Head of AI sits at the crossroads of strategy, engineering, and the company’s future, often acting as the spokesperson for innovation. Who wouldn’t want that visibility?

Even more exciting, there’s no rigid career path to becoming Head of AI: leaders from engineering, product, data, marketing, and strategy can all credibly grow into it.

So, what does a Head of AI do?

Let's set aside the mythologized image of someone stepping onto a keynote stage, backed by a rock anthem. At The Agile Monkeys, we define the role through these core responsibilities:

Turn AI into a clear business strategy.

Explain how AI shifts the economics of the business, the risks it introduces, and why certain projects matter more than others. This often looks like identifying a high-volume workflow where automation can cut cycle time dramatically without compromising oversight.

Give AI the right information to work with.

Decide what knowledge the system should use, how to structure and chunk it, and when to refresh it. The goal is to replace static, outdated documents with versioned, governed enterprise memory that reduces drift and hallucinations.
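To make “versioned enterprise memory” a little more concrete, here is a minimal Python sketch, assuming plain-text documents, word-based chunks with overlap, and one version string per document. The `Chunk` type and the `chunk_document` and `stale_documents` helpers are hypothetical names, not any particular library’s API; production systems usually split on tokens or document structure rather than raw words.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    version: str  # version of the source document this chunk came from
    index: int    # position of the chunk within the document
    text: str


def chunk_document(doc_id: str, version: str, text: str,
                   max_words: int = 200, overlap: int = 40) -> list[Chunk]:
    """Split text into overlapping word windows, stamping each with its source version."""
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be >= 0 and smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks: list[Chunk] = []
    start = 0
    while start < len(words):
        window = words[start:start + max_words]
        chunks.append(Chunk(doc_id, version, len(chunks), " ".join(window)))
        if start + max_words >= len(words):
            break  # this window already reaches the end of the document
        start += step
    return chunks


def stale_documents(indexed: dict[str, str], source: dict[str, str]) -> list[str]:
    """Return doc_ids whose indexed version is missing or differs from the current source version."""
    return sorted(doc_id for doc_id, version in source.items()
                  if indexed.get(doc_id) != version)


if __name__ == "__main__":
    text = " ".join(f"w{i}" for i in range(10))
    for chunk in chunk_document("refund-policy", "v2", text, max_words=4, overlap=1):
        print(chunk.index, chunk.text)
    print(stale_documents({"refund-policy": "v1", "faq": "v3"},
                          {"refund-policy": "v2", "faq": "v3", "pricing": "v1"}))
```

Re-chunking only the documents that `stale_documents` returns is one simple way to keep the index fresh without rebuilding everything. The design choice worth noticing is that every chunk carries its source version, so an answer can be traced back to the exact document revision it drew on.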

Make smart model and cost decisions.

Balance ROI, latency, and accuracy, and design systems that can scale: a $50/month prototype might grow into a $50K/month production system. Sometimes that means switching to a smaller model for lower latency and lower cost.
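The jump from $50 to $50K a month is mostly multiplication. Here is a back-of-the-envelope Python sketch, assuming per-request token counts and per-million-token prices that are purely hypothetical (not any vendor’s actual rates):

```python
def monthly_cost(requests_per_day: int, input_tokens: int, output_tokens: int,
                 usd_per_m_input: float, usd_per_m_output: float, days: int = 30) -> float:
    """Estimate monthly spend from traffic, tokens per request, and per-million-token prices."""
    per_request = (input_tokens * usd_per_m_input
                   + output_tokens * usd_per_m_output) / 1_000_000
    return requests_per_day * days * per_request


# Hypothetical workload: 2,000 input and 500 output tokens per request.
LARGE = (3.00, 15.00)  # hypothetical $ per 1M input / output tokens, larger model
SMALL = (0.25, 1.25)   # hypothetical $ per 1M input / output tokens, smaller model

if __name__ == "__main__":
    print(f"prototype, large model:  ${monthly_cost(100, 2000, 500, *LARGE):,.2f}")
    print(f"production, large model: ${monthly_cost(100_000, 2000, 500, *LARGE):,.2f}")
    print(f"production, small model: ${monthly_cost(100_000, 2000, 500, *SMALL):,.2f}")
```

Under these made-up numbers, the same workload at 1,000 times the traffic goes from about $40 to about $40,500 a month, and routing it to the smaller model brings it to about $3,375, provided accuracy still holds up in your evals.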

Build AI as a reliable platform, not scattered experiments.

Lead the cultural shift toward shared foundations the whole company uses (centralized retrieval, evals, logging, and routing), so new projects stop reinventing the basics and teams learn to engineer reliability into probabilistic systems instead of expecting deterministic outputs.

Measure, debug, and explain how AI works.

Define what “good” looks like (groundedness, correctness, contextual fit) and diagnose whether failures come from data, routing, or model behavior. Make the system’s reasoning visible so both technical teams and executives stay aligned.
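One lightweight way to start attributing failures to data, routing, or model behavior is to label each eval case with a few facts and apply a fixed triage order. The Python sketch below is hypothetical: the `EvalCase` fields, the ordering (routing, then data, then model), and the category names are illustrative assumptions, and in practice `context_has_answer` is often judged by a reviewer or a grader model.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class EvalCase:
    case_id: str
    expected_route: str       # pipeline/model this request should have gone to (hypothetical)
    actual_route: str         # pipeline/model it actually went to
    context_has_answer: bool  # did the retrieved context contain the needed facts?
    answer_correct: bool      # did the final answer pass the grader?


def triage(case: EvalCase) -> str:
    """Attribute a result to 'pass', 'routing', 'data', or 'model', checked in that order."""
    if case.answer_correct:
        return "pass"
    if case.actual_route != case.expected_route:
        return "routing"  # a wrong pipeline upstream can also explain missing context
    if not case.context_has_answer:
        return "data"     # the model never saw the information it needed
    return "model"        # right route, right context, still wrong


def summarize(cases: list[EvalCase]) -> Counter:
    """Count how many eval cases fall into each triage bucket."""
    return Counter(triage(c) for c in cases)


if __name__ == "__main__":
    cases = [
        EvalCase("c1", "billing", "billing", True, True),
        EvalCase("c2", "billing", "general", False, False),
        EvalCase("c3", "claims", "claims", False, False),
        EvalCase("c4", "claims", "claims", True, False),
        EvalCase("c5", "claims", "claims", False, False),
    ]
    print(summarize(cases))
```

A tally like this points to where to invest next: many `data` results implicate the knowledge base, many `routing` results implicate the router, and only the `model` bucket argues for trying a different model.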

Notice what's not on this list… You don't need to have trained frontier models. You don't need to be the strongest coder. You don't need to know every LLM.

The real test: can you lead an AI project end-to-end and avoid major mistakes?

Five moves to take the Head of AI spot

Itching to step into the role? Don’t wait for permission. The people who end up in Head of AI roles started doing the work before they had the title.

Here are five moves that consistently get leaders into the seat:

1. Own One Real Pilot End-to-End

Find a real problem and propose a small, scoped AI pilot. Own it from idea to deployment. Ship it, and pay attention to where it breaks. Early failures teach more than polished demos, especially about reliability and operational constraints.

2. Build Pattern Recognition by Shipping

Ship small projects, even if they’re internal tools or scrappy prototypes. This is where latency issues, edge cases, and strange behaviors show up. Reading articles and watching talks help, but pattern recognition comes from seeing systems behave in the wild.

3. Evaluate Ruthlessly and Stay Close to Reality

Run the same prompt multiple times. Try to break your own system. Build evaluations specific to your workflow, not generic benchmarks. Stay close enough to the work to understand what’s feasible today versus what only sounds good in meetings.
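“Run the same prompt multiple times” can be automated in a few lines. This Python sketch assumes the model is exposed as a plain function from prompt to text; `make_fake_model` is a hypothetical stand-in so the example runs offline, and you would swap in your own client call. It reports the most common answer and how often the runs agreed with it.

```python
from collections import Counter
from itertools import cycle
from typing import Callable


def consistency(model: Callable[[str], str], prompt: str, runs: int = 10) -> tuple[str, float]:
    """Call the model `runs` times; return the most common normalized answer and its share."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    outputs = [model(prompt).strip().lower() for _ in range(runs)]
    answer, count = Counter(outputs).most_common(1)[0]
    return answer, count / runs


def make_fake_model(replies: list[str]) -> Callable[[str], str]:
    """Hypothetical stand-in for a real model: cycles through canned replies, ignoring the prompt."""
    it = cycle(replies)
    return lambda prompt: next(it)


if __name__ == "__main__":
    flaky = make_fake_model(["Approve", "approve ", "Deny"])
    answer, agreement = consistency(flaky, "Should we refund order 123?", runs=9)
    print(answer, f"{agreement:.0%}")
```

Setting a minimum agreement rate per workflow, and failing the check below a threshold your team chooses, turns this from a one-off experiment into a regression test. Exact-string matching is deliberately crude; for free-text answers you would compare extracted fields or use a grader instead.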

4. Tell the Story of Impact (With a Bit of Vulnerability)

Present outcomes clearly: show how decisions changed workflows, reduced cost, or improved speed. Then share openly what worked, what didn’t, and why one approach was chosen over another, whether internally or on LinkedIn. Leaders who reveal their reasoning build credibility quickly.

5. Learn from Leaders + Embedded Partners

Read post-mortems and talk to current Heads of AI to understand how projects fail and what they’d do differently. Then go one step further: bring in partners who embed with your team so you can see those decisions made in real time: architecture tradeoffs, what gets shipped, what gets cut.

That’s how we work with clients: we join your standups, ship code with your engineers, and make the hard calls together.